A year of fedmsg in Debian

Nicolas Dandrimont

olasd@debian.org

August 24th 2014

The problem with distro infra

Services are like people

Dozens of services, developed by many people

Each service has its own way of communicating with the rest of the world

Meaning that a service that needs to interact (yep, that's all of them) will need to implement a bunch of communication systems

Debian infrastructure comms

dak, our archive software, uses emails and databases to communicate. Metadata is available in an RFC 822 format, but there is no real API (the DB is not public either)

wanna-build, our build queue management software, polls a database every few minutes to know what needs to get built. No API outside of its database

debbugs, our bug tracking system, works via email, stores its data in flat files (for now), and exposes a read-only SOAP API

SCM pushes in the distro-provided repos (on alioth) can trigger an IRC bot (KGB) and some mails. No central notification mechanism

Some kludges are available

UDD (the Ultimate Debian Database)

Contains a snapshot of a lot of the databases underlying the Debian infrastructure at a given time (even some Ubuntu bits)

A lot of cron-triggered importers

Useful for distro-wide QA purposes, not so much for real-time updates. Furthermore, consistency is not guaranteed

The PTS (Package Tracking System)

https://packages.qa.debian.org/ (and https://tracker.debian.org)

Cron-triggered updates on source packages

Subscribe for email updates on a given package

Messages not uniform, contain some headers for filtering and machine parsing

Not real-time

A proposed improvement: fedmsg

What is fedmsg?

Jesse Keating's talk in 2009 at NorthWest Linux Fest

A unified message bus, reducing the coupling of interdependent services

Services subscribe to one (or several) message topic(s), register callbacks, and respond to events
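As a rough illustration of the subscribe/callback model, here is a minimal in-process sketch. It is not fedmsg: `ToyBus`, its methods, and the `org.debian.dev.*` topic names are hypothetical. The only thing borrowed from the real bus is that 0mq subscriptions match topics by prefix.

```python
from collections import defaultdict


class ToyBus:
    """In-process stand-in for a message bus (hypothetical, not fedmsg)."""

    def __init__(self):
        # Maps a topic prefix to the callbacks registered for it.
        self._callbacks = defaultdict(list)

    def subscribe(self, prefix, callback):
        """Register callback for every topic that starts with prefix."""
        self._callbacks[prefix].append(callback)

    def publish(self, topic, msg):
        """Call each matching callback; return how many were called."""
        delivered = 0
        for prefix, callbacks in self._callbacks.items():
            if topic.startswith(prefix):
                for callback in callbacks:
                    callback(topic, msg)
                    delivered += 1
        return delivered


bus = ToyBus()
seen = []

# A consumer only interested in (hypothetical) bug tracker events.
bus.subscribe("org.debian.dev.debbugs", lambda topic, msg: seen.append((topic, msg)))

bus.publish("org.debian.dev.debbugs.bug.create", {"bug": 1})
bus.publish("org.debian.dev.dak.upload", {"package": "hello"})  # nobody listens
```

The publisher never needs to know who consumes its events, which is where the reduced coupling comes from.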

What you get (almost) for free

- A stream of data representing the infrastructure activity (stats)

- De-coupling of interdependent services

- A unified, pluggable notification system, gathering all the events in the project

  - irc

  - mobile

  - desktop

  - …

- badge/achievement system

- live web dashboard of events

- IRC feeds (#debian-fedmsg @ OFTC)

- identi.ca and twitter accounts that get banned for flooding :-)

- …

fedmsg inception

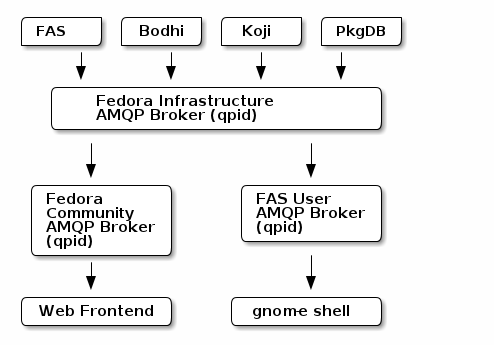

First candidate: AMQP as implemented by qpid

Issues:

- SPOF at the central broker

- brokers weren't very reliable

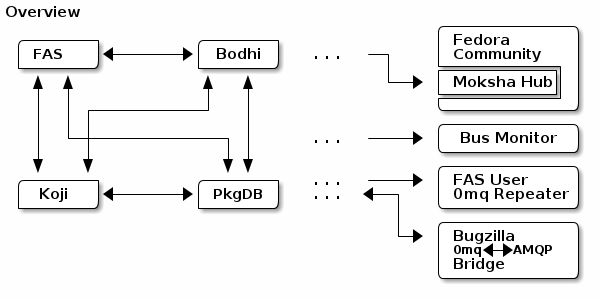

Actual message bus: 0mq

No broker, each service binds to a port and starts publishing messages

Other services connect to those ports and start consuming messages

Advantages

- No central broker

- 100-fold speedup over AMQP

Main issue with a brokerless system: service discovery

Three options

- Writing a broker (→ hello SPOF)

- Using DNS (most elegant solution)

- Distribute a text file

Simon Chopin implemented the DNS solution during his GSoC.
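To give an idea of what DNS-based discovery boils down to, here is a sketch that turns already-resolved SRV-style records into 0mq endpoint strings. It assumes SRV records (priority, weight, port, target) are used, does no DNS lookup itself, and the function name and host names are hypothetical.

```python
def endpoints_from_srv(records):
    """Build 0mq endpoint strings from SRV-style records.

    records is an iterable of (priority, weight, port, target) tuples,
    as a DNS library would return them. Lower priority comes first.
    """
    ordered = sorted(records, key=lambda record: (record[0], record[3]))
    return [
        "tcp://%s:%d" % (target.rstrip("."), port)
        for _priority, _weight, port, target in ordered
    ]


# Hypothetical records for two publishing services.
records = [
    (10, 0, 3000, "b.example.org."),
    (0, 0, 3001, "a.example.org."),
]
endpoints = endpoints_from_srv(records)
```

A consumer would then connect to each endpoint in the list, with no broker and no text file to distribute.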

Using the bus

Bus topology

Message topics

Event topics follow the rule:

org.distribution.ENV.SERVICE.OBJECT[.SUBOBJECT].EVENT

Where:

- ENV is one of dev, stg, or production.

- SERVICE is something like koji, bodhi, mentors, …

- OBJECT is something like package, user, or tag

- SUBOBJECT is something like owner or build (in the case where OBJECT is package, for instance)

- EVENT is a verb like update, create, or complete.
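The naming rule can be checked mechanically. The sketch below splits a topic according to the rule exactly as written above; `Topic`, `parse_topic` and the example topics are hypothetical, and topics on a live bus may not all follow the rule this strictly.

```python
from collections import namedtuple

Topic = namedtuple("Topic", "distribution env service object subobject event")

ENVS = ("dev", "stg", "production")


def parse_topic(topic):
    """Split org.distribution.ENV.SERVICE.OBJECT[.SUBOBJECT].EVENT."""
    parts = topic.split(".")
    # "org" + distribution + env + service + object (+ subobject) + event
    if len(parts) not in (6, 7) or parts[0] != "org":
        raise ValueError("topic does not follow the naming rule: %r" % topic)
    distribution, env, service, obj = parts[1:5]
    if env not in ENVS:
        raise ValueError("unknown environment: %r" % env)
    subobject = parts[5] if len(parts) == 7 else None
    return Topic(distribution, env, service, obj, subobject, parts[-1])


short = parse_topic("org.debian.dev.mentors.package.upload")
long = parse_topic("org.fedoraproject.stg.koji.package.build.complete")
```

Here `short.subobject` is `None` and `short.event` is `"upload"`, while `long.subobject` is `"build"` and `long.event` is `"complete"`.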

Publishing messages

From python:

    import fedmsg

    fedmsg.publish(topic='testing', modname='test', msg={
        'test': "Hello World",
    })

From the shell:

    $ echo "Hello World." | fedmsg-logger --modname=git --topic=repo.update
    $ echo '{"a": 1}' | fedmsg-logger --json-input
    $ fedmsg-logger --message="This is a message."
    $ fedmsg-logger --message='{"a": 1}' --json-input

Receiving messages

From python:

    import fedmsg

    # Load the fedmsg configuration
    config = fedmsg.config.load_config([], None)

    # Block and pretty-print every message seen on the bus
    for name, endpoint, topic, msg in fedmsg.tail_messages(**config):
        print(fedmsg.encoding.pretty_dumps(msg))

In the shell, you can use the fedmsg-tail command

Goodies

- All the stuff listed in "What you get (almost) for free" is implemented

- Cryptographic message signing: either via X.509 (Fedora) or GnuPG (Debian, implemented during GSoC13)

- Replay mechanism: detect if a message was missed (sequence id mismatch) and ask the sender for the remaining messages (implemented during GSoC13)
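The gap detection behind the replay mechanism can be sketched as follows. This is only an illustration of the sequence id idea: `SequenceTracker`, the per-sender counter and the sender name are assumptions, and the actual fedmsg replay protocol may work differently.

```python
class SequenceTracker:
    """Remember the last sequence id seen per sender and report gaps."""

    def __init__(self):
        self._last = {}

    def observe(self, sender, seq_id):
        """Record seq_id; return the ids that were skipped, if any."""
        last = self._last.get(sender)
        # First message from this sender: nothing to compare against.
        missing = [] if last is None else list(range(last + 1, seq_id))
        if last is None or seq_id > last:
            self._last[sender] = seq_id
        return missing


tracker = SequenceTracker()
tracker.observe("debbugs", 1)
tracker.observe("debbugs", 2)
gap = tracker.observe("debbugs", 5)  # ids 3 and 4 never arrived
```

A consumer that gets a non-empty list back would ask the sender to replay exactly those ids.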

fedmsg in Debian

GSoC 2013

A lot happened during GSoC 2013, thanks to Simon Chopin's involvement:

- fedmsg (and dependencies) packaging

- adaptations to make fedmsg more distribution-agnostic

- bootstrap of the Debian bus using mailing-list subscriptions, and on mentors.d.n

What then?

- Packaging adaptations to make it easier to run the fedmsg components individually (separate packages, init scripts and systemd service files).

- Proper package backports

- Bus maintenance: keeping the software up to date

Some stats

Since July 14th 2013:

- around 200k messages

- 155k bug mails

- 45k uploads

Latest developments

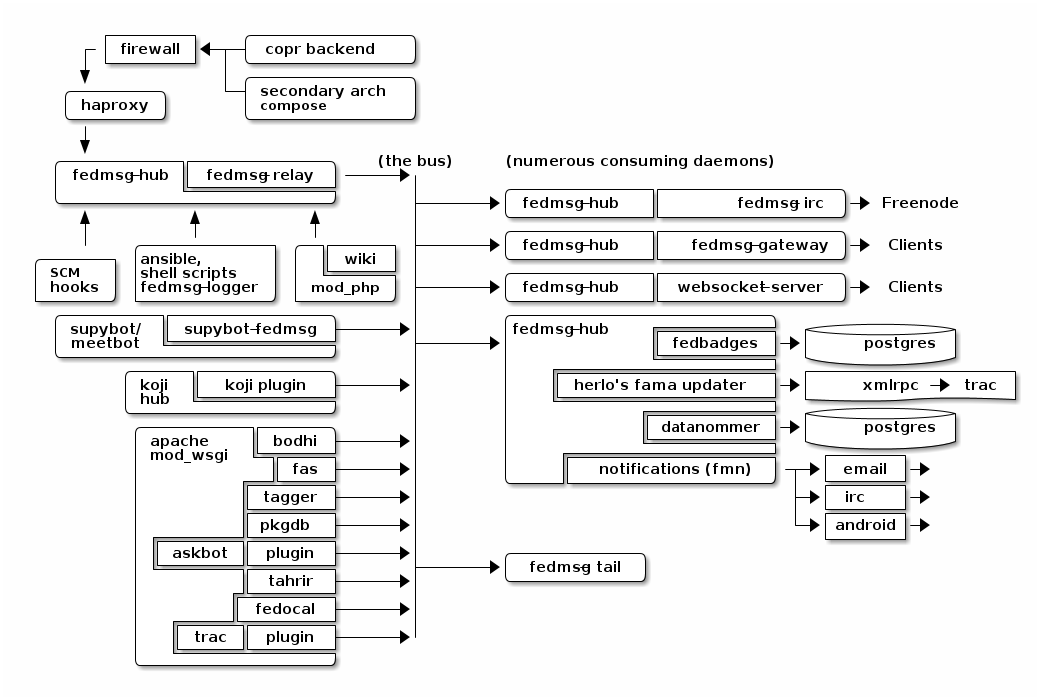

Datanommer (database component) has been packaged and is being deployed.

Debian services are widely distributed, and firewall restrictions are sometimes out of our control: we need a way for services to push their messages instead of having the fedmsg-gateway pull them.

fedmsg-relay: non-standard port, non-standard protocol.

Currently pondering a way for services to push their messages through https.

Conclusions & Questions

Contact me

olasd@debian.org

If there's interest, I'd be glad to hold an ad-hoc hacking session!